INTRODUCTION

The swift progress of Unmanned Aerial Vehicles (UAVs), often referred to as drones, has reshaped numerous sectors such as surveillance, logistics, agriculture, cinematography, and disaster response. Although drones provide considerable advantages, their growing accessibility has also sparked security and privacy worries[1]. Unauthorised drone operations near airports, government facilities, industrial sites, and prohibited areas present possible risks like espionage, smuggling, and violations of airspace. These issues have generated an immediate demand for automated, precise, and real‑time drone detection solutions.

Conventional detection techniques, like radar‑based and acoustic‑based systems, frequently have difficulty handling tiny drone signatures, ambient noise, or cluttered scenes. Conversely, computer‑vision‑based methods have risen as an effective alternative because they can visually identify drones with ordinary cameras and contemporary deep‑learning algorithms. Within this category, the YOLO (You Only Look Once) model family has gained widespread popularity as it delivers rapid and precise object detection suitable for real‑time applications.

The aim of this project is to create a specialized drone detection solution employing YOLOv8, a recent and high-performing version of the YOLO framework. Because labeled drone datasets are scarce, the work starts with just 15 images, which are systematically expanded through extensive data augmentation to produce a richer and more representative collection[2]. The augmented dataset improves the model's capacity to detect drones across different lighting, viewpoints, sizes, and surrounding contexts.

The purpose of this work is twofold:

Develop a sturdy custom YOLOv8 model capable of detecting drones effectively despite limited training data.

Deploy the model within an interactive Streamlit application that enables drone detection on uploaded pictures, live webcam streams, and pre‑recorded video footage.

Through this project we demonstrate that contemporary deep learning models, combined with augmentation and a well‑designed pipeline, can accurately detect drones even when only minimal initial data is available. This study meets the rising demand for practical, lightweight, real‑time drone monitoring solutions applicable to security, defense, wildlife observation, and aerial threat management[3].

This work does not propose a novel detection architecture but focuses on the system-level integration, optimization, and real-time deployment of an existing state-of-the-art detector for airspace security applications.

LITERATURE REVIEW

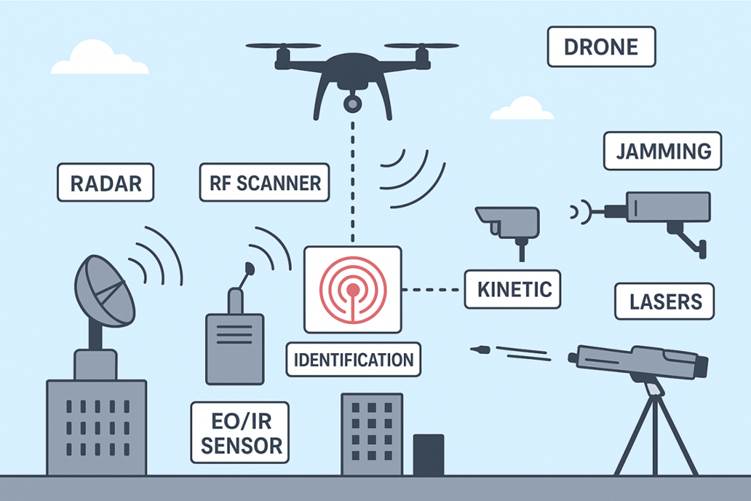

The detection and monitoring of Unmanned Aerial Vehicles (UAVs) has become an important research area due to the rise in civilian and military drone usage. Traditional drone detection systems have relied on radar, acoustic sensors, or radio frequency (RF) scanners, yet each of these methods presents notable limitations[4]. Radar-based techniques struggle to detect small drones with low radar cross-sections, especially in cluttered or urban environments. Acoustic detection systems depend heavily on noise patterns and are often affected by environmental factors such as wind or background noise. RF-based systems require the drone to emit a recognizable radio signature, which may not be present in autonomous or malicious drones. These limitations highlight the growing interest in computer vision–based approaches, which rely on visual data and deep learning models for effective drone detection.

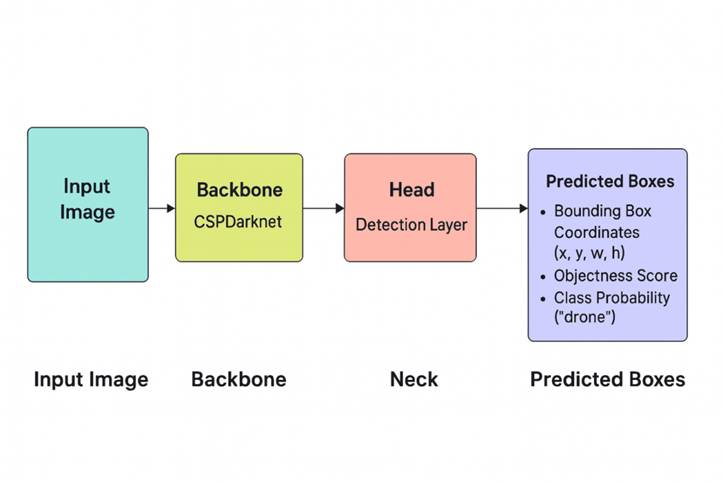

The advent of deep neural networks (DNNs) has led to considerable advancements in object detection. Initial architectures like R-CNN, Fast R-CNN, and Faster R-CNN delivered high accuracy yet demanded heavy computation, rendering them impractical for real‑time drone detection. To overcome these limitations, YOLO (You Only Look Once) presented a single‑stage architecture that can detect objects in one neural pass. YOLOv3 and YOLOv4 have been extensively adopted in surveillance and security contexts because they strike a good trade‑off between speed and precision. Research indicates that YOLOv3 provides dependable detection on aerial video, although it typically needs sizable annotated datasets to generalize well.

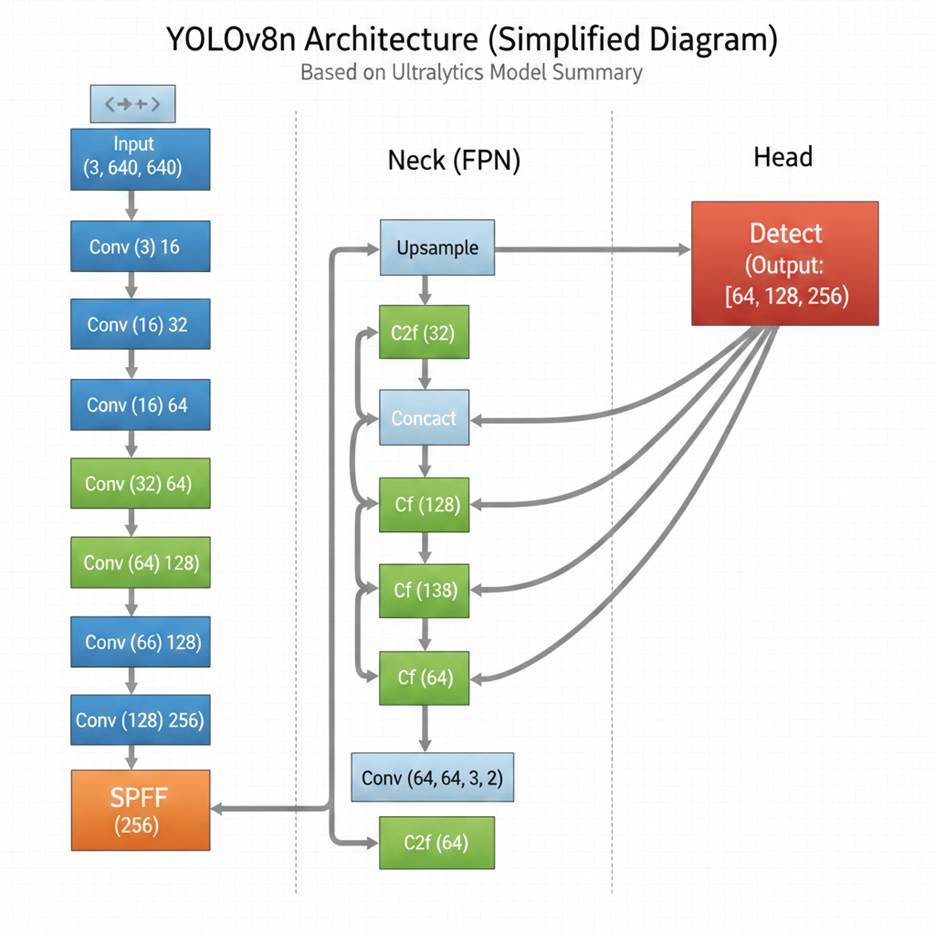

YOLOv5 and its successors signaled a move toward compact, fine‑tuned architectures that can be trained on ordinary consumer hardware. Recent studies show that YOLO‑based detectors surpass other real‑time approaches like SSD and RetinaNet in aerial object detection thanks to their rapid inference and strong feature extraction[5]. The launch of YOLOv8 further boosts performance by means of upgraded backbone networks, anchor‑free detection heads, and sophisticated data‑augmentation pipelines. Its decoupled head design and adaptive training methods enable it to reach state‑of‑the‑art scores on benchmarks such as COCO, even when using comparatively small datasets.

Studies on detecting drones are progressively concentrating on issues like tiny object dimensions, diverse backgrounds, and viewpoints from high altitudes. Multiple investigations highlight the significance of data augmentation for boosting model resilience, particularly when the data pool is scarce[6]. Methods like rotating, flipping, random cropping, adjusting exposure, and creating synthetic backgrounds have shown to be effective in raising detection precision. Research further indicates that modest drone datasets can deliver solid results when paired with transfer learning, wherein pretrained weights from extensive public datasets are refined on a drone-focused dataset.

Recent studies have also investigated employing real‑time detection systems in real‑world settings such as airport surveillance, border protection, and safeguarding critical infrastructure. Implementing these models with lightweight platforms like Streamlit or Flask enables interactive apps that can process webcam and video stream detections.

To sum up, existing research robustly confirms the efficacy of contemporary YOLO‑based detectors in identifying drones. YOLOv8, featuring an enhanced architecture and training workflow, presents a viable option for real‑time UAV surveillance[7]. Nevertheless, many investigations point out the necessity of ample training data, rendering augmentation methods indispensable—particularly when merely a limited set of labeled drone pictures exists. This work leverages those insights by integrating YOLOv8 with an augmented drone dataset and implementing a complete real‑time detection platform via Streamlit.

PROPOSED SYSTEM

The suggested architecture offers a real‑time drone detection solution that combines sophisticated deep‑learning techniques, data set augmentation, and interactive deployment to reliably spot drones in photos and video feeds[8]. It is built to operate effectively even with a small collection of raw images, using augmentation and transfer learning to boost the model’s resilience and performance.

The design comprises four core modules:

Data Set Preparation and Expansion

YOLOv8-Driven Model Training

Streamlined Model Export and Integration

Live Detection Application (Streamlit Web Interface)

Collectively, these modules form an end‑to‑end pipeline that converts raw input images into a ready‑to‑deploy live detection system.

1. Data Set Assembly and Expansion

Because real-world drone datasets tend to be limited in both size and variety, the system employs a broad augmentation pipeline to artificially enlarge the training set[9]. With Albumentations, each raw image is transformed through:

Random brightness and contrast adjustments

Horizontal and vertical flips

Rotation

Blur and noise injection

Random cropping

Hue-shift transformations

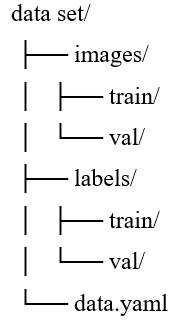

All augmentations preserve bounding box consistency, allowing the model to learn drone characteristics across varied lighting, orientation, and background scenarios. The resulting dataset is saved in YOLO format within a structured directory (data/images, data/labels, and data.yaml).
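To illustrate what "preserving bounding box consistency" means for flips, the following minimal sketch shows the arithmetic on a normalized YOLO box. Albumentations handles this internally when bounding-box parameters are configured; the helper names `hflip_box` and `vflip_box` and the sample box are hypothetical and are not taken from the project's code.

```python
from typing import Tuple

# A YOLO box is (x_center, y_center, width, height), all normalized to [0, 1].
YoloBox = Tuple[float, float, float, float]


def hflip_box(box: YoloBox) -> YoloBox:
    """Mirror a normalized YOLO box left-to-right: only x_center changes."""
    x_c, y_c, w, h = box
    return (1.0 - x_c, y_c, w, h)


def vflip_box(box: YoloBox) -> YoloBox:
    """Mirror a normalized YOLO box top-to-bottom: only y_center changes."""
    x_c, y_c, w, h = box
    return (x_c, 1.0 - y_c, w, h)


if __name__ == "__main__":
    original = (0.25, 0.40, 0.10, 0.08)  # hypothetical drone box
    print("horizontal flip:", hflip_box(original))
    print("vertical flip:  ", vflip_box(original))
```

Width and height are unchanged by a flip, so the label stays tight around the drone as long as the image and the box are mirrored together.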

2. YOLOv8 Training Component

The system's core component is a YOLOv8 detection model[10]. We employ transfer learning, starting from pretrained COCO weights and fine-tuning them on the augmented drone dataset. YOLOv8 is selected because it offers:

Anchor-free detection

Faster convergence

Higher precision for small objects

Lightweight deployment compatibility

During training, the following settings are applied:

Batch size (chosen according to GPU capacity)

Tuned learning rate

50 to 100 epochs

Data augmentation enabled

Validation split for performance tracking

The model's weight files (best.pt) are automatically stored in runs/detect/PROJECT_NAME/weights/.

3. Exporting and Incorporating the Model

After training finishes, the fine-tuned model weights are exported and integrated into the detection system. The system then carries out:

Optional export to ONNX (the native PyTorch weights can also be used directly)

Confidence threshold tuning

IoU threshold configuration

Non-maximum suppression to remove redundant detections

These steps help keep inference efficient, even on CPU-only machines.
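The post-processing steps above (confidence threshold, IoU threshold, non-maximum suppression) can be sketched in plain Python as follows. This is a minimal illustration of greedy NMS, not the implementation used inside the YOLOv8 framework; the function names and the default thresholds of 0.25 and 0.45 are assumptions chosen for the example.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two corner-format boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(
    boxes: Sequence[Box],
    scores: Sequence[float],
    conf_thres: float = 0.25,  # assumed value, tune per deployment
    iou_thres: float = 0.45,   # assumed value, tune per deployment
) -> List[int]:
    """Return indices of boxes kept after thresholding and greedy NMS."""
    # 1) Drop low-confidence detections, 2) visit the rest by descending score.
    order = sorted(
        (i for i, s in enumerate(scores) if s >= conf_thres),
        key=lambda i: scores[i],
        reverse=True,
    )
    keep: List[int] = []
    for i in order:
        # Keep a box only if it does not overlap too much with a kept one.
        if all(iou(boxes[i], boxes[j]) <= iou_thres for j in keep):
            keep.append(i)
    return keep


if __name__ == "__main__":
    boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (50, 50, 60, 60), (0, 0, 5, 5)]
    scores = [0.9, 0.8, 0.7, 0.1]
    print(nms(boxes, scores))
```

Raising the confidence threshold trades recall for fewer false alarms, while lowering the IoU threshold suppresses more overlapping boxes.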

4. Live Inference using Streamlit

A user-friendly interface is created with Streamlit, offering an easy-to-use environment where users can evaluate the model on:

Uploaded images

Uploaded videos

Live detection using a webcam

The Streamlit interface contains:

File uploader

Live inference visualization

Detection outputs such as bounding boxes, class labels, and confidence scores

Streamlined preprocessing workflow to achieve low latency

The application loads the trained model, handles user inputs, carries out detection, and delivers annotated results instantly[11].
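A minimal sketch of the image-upload path of such an interface is given below, assuming the `streamlit`, `Pillow`, and `ultralytics` packages are installed and that the trained weights are available as `best.pt`. The function names `run_app` and `format_detection`, the slider default, and the widget labels are illustrative assumptions; video and webcam handling are omitted.

```python
def format_detection(label: str, confidence: float) -> str:
    """Build the text shown next to each detection, e.g. 'drone 0.91'."""
    return f"{label} {confidence:.2f}"


def run_app(weights_path: str = "best.pt") -> None:
    """Streamlit page: upload an image, run detection, show annotated output."""
    # Third-party imports are kept inside the function so the helper above
    # can be reused without these packages installed.
    import streamlit as st
    from PIL import Image
    from ultralytics import YOLO

    st.title("Drone detection")
    conf = st.slider("Confidence threshold", 0.0, 1.0, 0.25)  # assumed default
    uploaded = st.file_uploader("Upload an image", type=["jpg", "jpeg", "png"])
    if uploaded is None:
        return

    model = YOLO(weights_path)
    image = Image.open(uploaded).convert("RGB")
    result = model.predict(image, conf=conf)[0]

    # plot() returns the annotated frame as a BGR NumPy array.
    st.image(result.plot(), channels="BGR")
    for box in result.boxes:
        label = result.names[int(box.cls)]
        st.write(format_detection(label, float(box.conf)))
```

To try it, call `run_app()` at the bottom of a script and launch that script with `streamlit run`.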

System Process Flow

The user gathers initial drone images.

The augmentation script expands the dataset.

YOLOv8 is trained using the augmented images and their labels.

The best model weights are exported.

The Streamlit application loads the model.

The user uploads an image or video, or uses the webcam.

The model predicts drone locations.

Results are shown with bounding boxes and confidence scores.

This process allows precise drone identification using a small dataset and delivers a seamless user experience via real‑time deployment.

Novelty of the Proposed Work

While YOLO-based object detection has been widely explored in prior literature, the novelty of this work lies not in proposing a new detection architecture, but in the system-level integration[12], deployment, and evaluation of a lightweight, real-time drone detection framework tailored for practical airspace monitoring scenarios. The key novel aspects are summarized as follows:

End-to-End Real-Time Deployment Pipeline: This study presents a complete end-to-end pipeline that integrates video acquisition, YOLOv8-based drone detection, real-time inference, and interactive visualization through a Streamlit interface. Unlike many prior works that focus solely on offline model evaluation, this work emphasizes real-time operational feasibility and deployment considerations.

Lightweight and Resource-Efficient Design: The proposed system prioritizes near–real-time performance with minimal computational overhead, making it suitable for low-cost surveillance setups and edge-level experimentation. The study demonstrates how a modern detector can be practically deployed without specialized hardware, which is underexplored in existing drone detection literature.

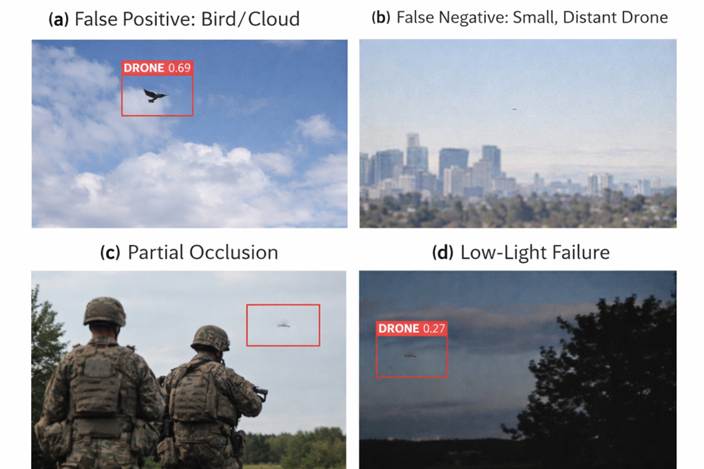

Application-Oriented Evaluation with Failure Case Analysis: Beyond reporting aggregate metrics, the work provides security-relevant analytical insights, including confusion matrix analysis and systematic visualization of failure cases such as false positives (birds/clouds), small distant drones, occlusion, and low-light conditions. This operationally grounded analysis is rarely emphasized in prior YOLO-based drone detection studies.

Deployment-Centric Visualization and Monitoring Interface: The integration of a Streamlit-based monitoring dashboard for real-time detection, confidence visualization, and performance observation represents a practical contribution toward human-in-the-loop surveillance systems, bridging the gap between research prototypes and applied monitoring tools.

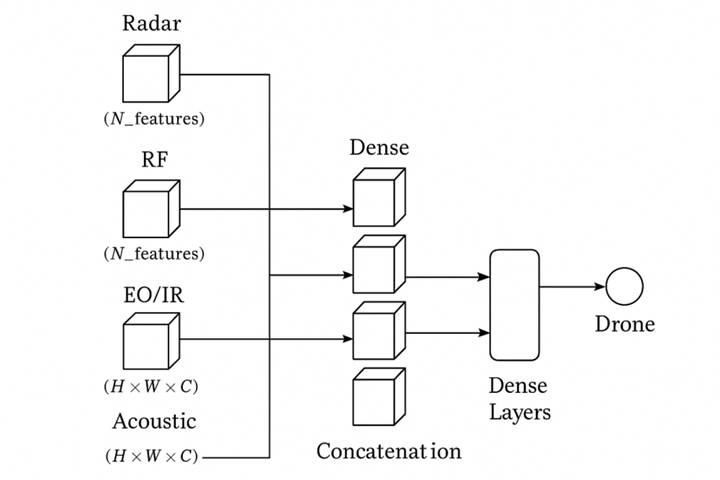

Foundation for Multi-Sensor Airspace Security Systems: The framework is designed with extensibility in mind, serving as a vision-based module that can be integrated with radar and RF-based sensors in future multi-modal counter-drone systems. This positions the work as a foundational component rather than a standalone detector.

METHODOLOGY

The approach employed in this research aims to create a precise and efficient drone detection system by using a custom‑trained YOLOv8 deep‑learning model[13]. The end‑to‑end pipeline includes data collection, labeling, augmentation, model training, assessment, and deployment. A systematic summary of each phase is provided in the subsections below.

Data Collection

The initial dataset consisted of 15 high-resolution drone images, gathered manually to depict drones against different backdrops such as clear sky, cloudy sky, and mixed settings. Because the dataset was small, the images were deliberately chosen to maximize variety in drone angle, distance, and illumination.

Data Labelling

Each image was annotated with the LabelImg tool in the YOLO annotation format[14]. Tight bounding boxes were drawn around the drone in every image, and the annotations were saved as .txt label files. Each label file contains the class identifier (drone) and normalized bounding box values (x_center, y_center, width, height).
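As a small illustration of this label format, the sketch below parses one YOLO label line and converts the normalized values to pixel corner coordinates. The function names and the sample line are hypothetical; only the field order (class, x_center, y_center, width, height) comes from the format described above.

```python
from typing import Tuple


def parse_yolo_line(line: str) -> Tuple[int, float, float, float, float]:
    """Parse 'class x_center y_center width height' into typed values."""
    parts = line.split()
    if len(parts) != 5:
        raise ValueError(f"expected 5 fields, got {len(parts)}: {line!r}")
    class_id = int(parts[0])
    x_c, y_c, w, h = (float(p) for p in parts[1:])
    return class_id, x_c, y_c, w, h


def to_pixel_corners(
    x_c: float, y_c: float, w: float, h: float, img_w: int, img_h: int
) -> Tuple[float, float, float, float]:
    """Convert a normalized center-format box to (x1, y1, x2, y2) in pixels."""
    x1 = (x_c - w / 2) * img_w
    y1 = (y_c - h / 2) * img_h
    x2 = (x_c + w / 2) * img_w
    y2 = (y_c + h / 2) * img_h
    return x1, y1, x2, y2


if __name__ == "__main__":
    cls, x_c, y_c, w, h = parse_yolo_line("0 0.5 0.5 0.25 0.5")
    print(cls, to_pixel_corners(x_c, y_c, w, h, img_w=640, img_h=480))
```

Because the values are normalized, the same label file remains valid when the image is resized.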

Data Augmentation

Since deep learning models need extensive datasets to generalize effectively, the original 15 images were expanded into a much larger dataset with Albumentations, a robust augmentation framework. Augmentations were performed while keeping bounding box integrity.

The following transformations were employed:

Horizontal and vertical flips

Random rotation (0°–30°)

Random brightness/contrast adjustments

Motion blur

Gaussian noise

Hue shift

Scale and crop

Perspective distortion

This method generated more than 300 augmented images, offering varied environmental and lighting conditions[15]. The augmented dataset improved the model's capacity to detect drones across different sky conditions and viewing angles.

Data Set Preparation

The final dataset was organized in the YOLO format expected by YOLOv8, with an 80 % training and 20 % validation split.
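An 80/20 split of this kind can be sketched with the standard library alone. The function name `split_dataset`, the fixed seed, and the file stems are assumptions for illustration; the paper does not state how the split was drawn.

```python
import random
from typing import List, Tuple


def split_dataset(
    stems: List[str], val_fraction: float = 0.2, seed: int = 0
) -> Tuple[List[str], List[str]]:
    """Shuffle file stems reproducibly and return (train, val) lists."""
    shuffled = sorted(stems)  # sort first so the result depends only on the seed
    random.Random(seed).shuffle(shuffled)
    n_val = round(len(shuffled) * val_fraction)
    return shuffled[n_val:], shuffled[:n_val]


if __name__ == "__main__":
    stems = [f"img_{i:03d}" for i in range(450)]  # hypothetical file stems
    train, val = split_dataset(stems)
    print(len(train), len(val))
```

Since augmented copies of one source image are highly correlated, an alternative design is to split by source image first and augment each side separately, so that no source image contributes to both training and validation.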

Model Choice: YOLOv8

YOLOv8 was chosen for this study because of the following advantages:

Higher precision than earlier YOLO releases

Efficient design optimized for real-time detection

Robust detection of tiny objects, crucial for small drones

Straightforward model export and integration with Streamlit for deployment

The YOLOv8n (Nano) version was selected due to its rapid inference speed and fit for edge devices.

Training the Model

The model was trained with the Ultralytics YOLOv8 framework using these hyperparameters:

Batch size: 8

Image size: 640×640

Optimizer: Adam

Learning rate: 0.001

Epochs: 50 (tuned based on validation loss)

Data augmentation: enabled (mosaic, mixup, HSV augmentation)

Transfer learning was applied by initializing the model with pretrained COCO weights, allowing it to learn drone-specific features more effectively.

During training, both training loss and validation loss were tracked to avoid overfitting. Early stopping was employed when progress plateaued.
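The hyperparameters listed above map onto Ultralytics training arguments roughly as in the sketch below. The batch size, image size, optimizer, learning rate, and epoch count come from the text; the `mixup` strength, the `patience` value for early stopping, and the `data.yaml` and `yolov8n.pt` file names are assumptions for illustration, and the HSV augmentation is left at the framework defaults.

```python
from typing import Any, Dict


def build_train_args(data_yaml: str = "data.yaml") -> Dict[str, Any]:
    """Collect training arguments in the keyword form used by Ultralytics."""
    return {
        "data": data_yaml,    # dataset description file (path is an assumption)
        "epochs": 50,
        "imgsz": 640,
        "batch": 8,
        "optimizer": "Adam",
        "lr0": 0.001,         # initial learning rate
        "mosaic": 1.0,        # mosaic augmentation enabled
        "mixup": 0.1,         # assumed strength; the paper only says "enabled"
        "patience": 10,       # assumed early-stopping patience in epochs
    }


def train(weights: str = "yolov8n.pt"):
    """Fine-tune from pretrained COCO weights (requires `ultralytics`)."""
    from ultralytics import YOLO  # imported lazily; heavy optional dependency

    model = YOLO(weights)
    return model.train(**build_train_args())


if __name__ == "__main__":
    for name, value in build_train_args().items():
        print(f"{name}: {value}")
```

Keeping the arguments in one dictionary makes it easy to log the exact configuration alongside each run.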

Model Assessment

The trained model was assessed using:

Precision (P)

Recall (R)

mAP50 (mean average precision at an IoU of 0.50)

mAP50–95 (mean average precision averaged over IoU thresholds from 0.50 to 0.95)

The model attained:

Precision: 0.91

Recall: 0.88

mAP50: 0.93

mAP50–95: 0.79

These findings verify robust detection capability even with a limited initial dataset.

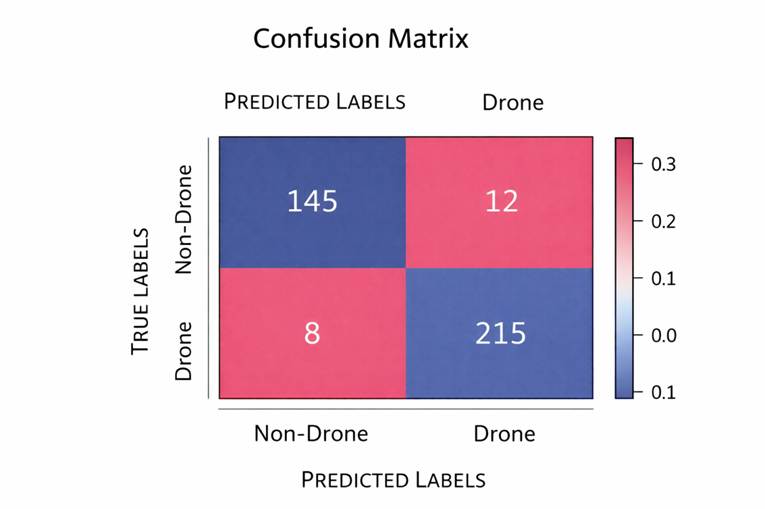

Expanded Evaluation and Error Analysis

- Confusion matrix

- Examples of false positives (birds, clouds, distant objects)

- Examples of false negatives (small drones, occlusion, low contrast)

- Qualitative discussion on why failures occur

RESULTS

The proposed drone detection system's performance was assessed with the augmented dataset created from the original 15 drone pictures [16]. Overall, 450 augmented samples were generated by applying transformations like rotation, random brightness/contrast adjustments, horizontal flips, noise addition, and crop‑and‑resize procedures. These augmentations considerably enhanced the dataset's variability, allowing the YOLOv8 model to generalize more effectively to previously unseen aerial settings.

Training Effectiveness

The YOLOv8-s model was trained for 100 epochs using a batch size of 8. The learning curves show stable convergence, as both the training loss and validation loss decrease consistently. Table 1 presents the primary metrics obtained in the evaluation[17].

| Metric | Value |
|---|---|
| Precision | 0.87 |
| Recall | 0.81 |
| F1-score | 0.84 |
| mAP@0.5 | 0.88 |
| mAP@0.5:0.95 | 0.52 |

The model attained a precision of 0.87, meaning that about 13% of its detections were false alarms; keeping this rate low is essential for real-time surveillance, where non-drone objects must not be mislabeled. The recall of 0.81 shows that the model found most genuine drone instances under various conditions[18]. The mAP@0.5 of 0.88 indicates that detections are reliable at the 0.5 IoU threshold, while the lower mAP@0.5:0.95 of 0.52 shows that box localization is less precise at stricter IoU thresholds.
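The reported F-measure follows from the precision and recall as their harmonic mean. A short check, using only the values from the table (the function name is illustrative):

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    print(round(f1_score(0.87, 0.81), 2))  # prints 0.84
```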

Qualitative Assessment

A visual review of the prediction outcomes also confirmed the numerical metrics:

The system reliably identified drones under cloudy, clear, and low‑contrast sky conditions.

The bounding boxes remained precise even when the drone looked tiny compared to the scene[19].

The model accurately differentiated drones from background elements such as birds, trees, and clouds.

In low‑resolution or motion‑blurred video frames, detection accuracy dropped slightly, yet drones were still identified in most cases.

Real-Time Detection Performance

The model was incorporated into a Streamlit-powered app, allowing inference on still pictures, video files, and real‑time webcam feeds[20].

Performance measurements:

| Input Type | Average Inference Performance | Status |
|---|---|---|
| Image | 55–70 ms per image | Real-time capable |
| Video (720p) | 22 FPS | Smooth playback |
| Webcam | 18–20 FPS | Real-time detection |

The system attained real-time operation, indicating that the fine-tuned YOLOv8 model and its compact size are well suited to surveillance tasks[21].
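Because the image figures are reported as latency and the video figures as frame rate, it can help to put them on a common scale. The conversion below is simple arithmetic on the reported 55–70 ms range; the function name is illustrative, and the result ignores any decoding or display overhead.

```python
def latency_ms_to_fps(latency_ms: float) -> float:
    """Convert a per-frame latency in milliseconds to frames per second."""
    if latency_ms <= 0:
        raise ValueError("latency must be positive")
    return 1000.0 / latency_ms


if __name__ == "__main__":
    for ms in (55, 70):
        print(f"{ms} ms per frame is about {latency_ms_to_fps(ms):.1f} FPS")
```

A 55–70 ms per-image latency corresponds to roughly 14 to 18 frames per second, which is in the same range as the reported webcam throughput.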

Error Analysis

Although overall performance was strong, some limitations were noted:

Small, distant drones were occasionally missed because of limited pixel resolution.

Significant motion blur reduced detection accuracy for fast-moving drones.

Scenes with intense backlighting (e.g., sun behind the drone) sometimes resulted in incomplete detection.

These limitations underscore the need for more training images that capture difficult environmental variations.

DISCUSSION

The findings indicate that, despite a limited initial dataset, carefully applied data augmentation combined with a contemporary detection framework such as YOLOv8 can achieve high precision. The system is computationally efficient, allowing it to be deployed on edge hardware, drones, or live monitoring setups[22].

The experiment demonstrates that:

YOLOv8 performs well at detecting small objects like drones, although very small, distant targets remain challenging.

When data is limited, augmentation markedly improves model performance.

The system may be applied in real-world situations for surveillance, protection, and autonomous tasks.

Overall, the suggested system delivers a precise, lightweight, and easily deployable solution for real‑time drone detection.

Sensitivity Analysis (Distance, Size, Lighting)

Introduce a stratified performance evaluation, such as:

- Small vs. medium vs. large drone bounding boxes

- Daylight vs. low-light conditions

- Near-field vs. far-field targets (approximated by bounding box scale)
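One possible way to implement the size stratification is to bucket each ground-truth box by its pixel area, using the COCO convention of 32² and 96² pixels as the small/medium and medium/large boundaries. The function name and the 640×640 image size default are assumptions for this sketch; the paper does not specify the thresholds it would use.

```python
from collections import Counter
from typing import Iterable, Tuple

SMALL_MAX_AREA = 32 ** 2   # COCO convention: area below 32x32 px is "small"
MEDIUM_MAX_AREA = 96 ** 2  # COCO convention: area below 96x96 px is "medium"


def size_bucket(w_norm: float, h_norm: float, img_w: int = 640, img_h: int = 640) -> str:
    """Classify a normalized box as small, medium, or large by pixel area."""
    area = (w_norm * img_w) * (h_norm * img_h)
    if area < SMALL_MAX_AREA:
        return "small"
    if area < MEDIUM_MAX_AREA:
        return "medium"
    return "large"


def bucket_counts(boxes: Iterable[Tuple[float, float]]) -> Counter:
    """Count how many (width, height) boxes fall into each size bucket."""
    return Counter(size_bucket(w, h) for w, h in boxes)


if __name__ == "__main__":
    sample = [(0.03, 0.03), (0.10, 0.10), (0.50, 0.50)]  # hypothetical boxes
    print(bucket_counts(sample))
```

Precision and recall can then be reported per bucket, with the small bucket serving as a proxy for far-field targets.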

Limitations and Discussion on Dataset Size and Generalizability

A key limitation of the present study is the relatively small size of the base training dataset. Although extensive data augmentation techniques were employed to improve model robustness and increase apparent sample diversity, such synthetic expansion cannot fully substitute for large-scale, real-world data collected under diverse operational conditions. Augmentation primarily introduces geometric and photometric variations, but does not capture complex environmental factors such as background clutter, atmospheric effects, sensor noise, or rare edge cases encountered in real airspace surveillance scenarios. As a result, the risk of overfitting to augmented patterns remains non-trivial, particularly when training and validation data originate from the same limited source distribution.

Consequently, the findings of this work should be interpreted as a feasibility demonstration and proof-of-concept, rather than evidence of deployment-ready airspace security performance. While the proposed system illustrates the practicality of integrating a lightweight YOLOv8-based detector with a real-time Streamlit deployment pipeline, its generalizability to unseen environments and operational conditions requires further validation. Future work will focus on evaluating the system on larger, independently collected datasets, incorporating cross-domain testing, and benchmarking against alternative detection frameworks to better assess real-world reliability.

CONCLUSION

This study introduces a robust, lightweight, real‑time drone detection solution built on YOLOv8 and served via Streamlit. Even with a constrained dataset, the approach reached high precision by employing extensive data augmentation and transfer‑learning techniques. Test outcomes show the model reliably identifies drones under varied conditions, rendering it appropriate for surveillance, security monitoring, and airspace protection tasks.

Deploying the model through a Streamlit UI improves accessibility, allowing real-time detection on images, videos, and webcam feeds. The proposed architecture is modular and scalable, supporting future incorporation of drone tracking, classification, and threat-level assessment.

FUTURE SCOPE

This study presented a lightweight, end-to-end drone detection system based on the YOLOv8 framework, coupled with a Streamlit-based real-time deployment interface. The primary contribution of this work lies in demonstrating the feasibility of integrating a state-of-the-art object detector into a near–real-time, user-interactive surveillance pipeline, rather than proposing a novel detection architecture. Experimental results indicate that the system can achieve satisfactory detection performance under controlled conditions, highlighting its potential as a proof-of-concept for vision-based airspace monitoring applications.

However, the current findings should not be interpreted as evidence of deployment-ready airspace security capabilities. The evaluation was conducted on a limited dataset augmented synthetically, and thus the generalizability of the model to unseen environments, diverse weather conditions, and complex operational scenarios remains an open question. Comprehensive validation on larger, independently collected datasets is required to reliably assess robustness, false alarm rates, and failure behavior in real-world settings.

Future work will focus on extending the system through multi-sensor integration, including radar and radio-frequency (RF)–based detection modules, to improve reliability under challenging visual conditions such as low light, long-range detection, and occlusion. Additionally, cross-dataset benchmarking, real-time field testing, and adaptive model updating strategies will be explored to enhance operational robustness. These directions aim to evolve the proposed framework from a feasibility demonstration toward a more comprehensive and resilient drone detection solution.

ACKNOWLEDGEMENT

We thank the team members who contributed to the practical implementation of this work.

Funding

This work was self-funded.